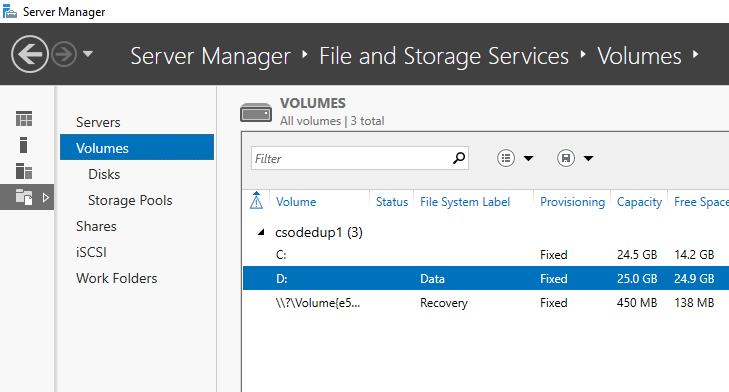

Microsoft provides a tool, DDPEval.exe, that you can use to check the savings that deduplication might provide, but to use it you need to install the Data Deduplication component. You can install the component either from the Server Manager GUI, by expanding the 'File and Storage Services' role and then selecting the 'Data Deduplication' component within the 'File and iSCSI Services' container, or from PowerShell with the command `Install-WindowsFeature -Name FS-Data-Deduplication`.

Starting with Windows Server 2016, the cluster rolling upgrade functionality allows a cluster to run in mixed mode, that is, the nodes are no longer required to run identical Windows OS versions. Data Deduplication supports this mixed-mode cluster configuration, so data remains fully accessible during a cluster rolling upgrade.

Deduplication has a setting called MinimumFileAgeDays that controls how old a file should be before it is processed. This setting is configurable by the user and can be set to '0' to process files regardless of how old they are.

Chunks are stored in container files, and new chunks are appended to the current chunk store container. When its size reaches about 1 GB, that container file is sealed and a new container file is created.

Deduplicated data chunks are not deleted immediately when a file is deleted. The references (or reparse points) to the deduplicated chunks are deleted, and a garbage collection job runs later to reclaim the space held by obsolete chunks.

Data deduplication is transparent to the end user: the path to a deduplicated file remains the same, and a user who requests a deduplicated file retrieves it in exactly the same manner as if deduplication were not enabled. Deduplication will try to keep the deduplicated chunks together on disk, so sequential access should not be affected. Deduplication also has its own cache, so if a file is requested repeatedly, it will not be necessary to reconstitute the file for every request.

The downside of deduplication is that if a file chunk is lost, several files can be corrupted, including file backups held elsewhere on disk. RAID protection will help reduce this risk, and off-disk backups are essential.

Data Deduplication was introduced in Windows Server 2012, but had some limitations. Volume sizes above 10 TB were not considered good candidates for deduplication, nor were the very large files that are typical of backup processes, and the Data Deduplication process used a single thread and I/O queue for each volume.

Data Deduplication in Windows Server 2016 supports volume sizes up to 64 TB and files up to 1 TB. The Data Deduplication process now runs multiple threads in parallel using multiple I/O queues for each volume, which speeds up the post-processing operations. Data Deduplication is fully supported on Nano Server, and the configuration for Virtualized Backup Applications is simplified, as the requirement for manual tuning of the deduplication settings has been replaced by a predefined Usage Type option.
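The chunk store behaviour described in this section (a minimum file age before processing, containers that are sealed at a size limit, and deferred garbage collection) can be illustrated with a small Python model. This is only a sketch under stated assumptions, not how Windows implements it: the class and function names are hypothetical, the container limit is a few bytes rather than about 1 GB, reference counting stands in for whatever bookkeeping the real garbage collection job uses, and the file age is measured from whatever timestamp the caller supplies.

```python
from dataclasses import dataclass, field

SECONDS_PER_DAY = 86400
CONTAINER_LIMIT = 1024  # bytes; a tiny stand-in for the ~1 GB limit in the text


def eligible_for_dedup(file_timestamp, now, minimum_file_age_days):
    """Return True once the file is at least minimum_file_age_days old.

    A value of 0 makes every file eligible, mirroring MinimumFileAgeDays = 0.
    Which timestamp the real setting uses is not modelled here (assumption).
    """
    return now - file_timestamp >= minimum_file_age_days * SECONDS_PER_DAY


@dataclass
class Container:
    chunks: dict = field(default_factory=dict)  # chunk_id -> bytes
    size: int = 0
    sealed: bool = False


class ContainerStore:
    """Hypothetical chunk store: append-only containers plus deferred cleanup."""

    def __init__(self, limit=CONTAINER_LIMIT):
        self.limit = limit
        self.containers = [Container()]
        self.location = {}  # chunk_id -> index of the container holding it
        self.refcount = {}  # chunk_id -> number of file references

    def add_chunk(self, chunk_id, data):
        # A chunk that is already stored only gains another reference.
        if chunk_id in self.location:
            self.refcount[chunk_id] += 1
            return
        current = self.containers[-1]
        current.chunks[chunk_id] = data
        current.size += len(data)
        self.location[chunk_id] = len(self.containers) - 1
        self.refcount[chunk_id] = 1
        # Seal the container once it reaches the limit and open a new one.
        if current.size >= self.limit:
            current.sealed = True
            self.containers.append(Container())

    def delete_file(self, chunk_ids):
        # Deleting a file drops its references; chunk data stays on disk.
        for chunk_id in chunk_ids:
            self.refcount[chunk_id] -= 1

    def garbage_collect(self):
        # Later job: reclaim the space of chunks that nothing references.
        reclaimed = 0
        for chunk_id in [c for c, n in self.refcount.items() if n == 0]:
            container = self.containers[self.location.pop(chunk_id)]
            data = container.chunks.pop(chunk_id)
            container.size -= len(data)
            reclaimed += len(data)
            del self.refcount[chunk_id]
        return reclaimed
```

In this model, `delete_file` never frees space by itself; the bytes only come back when `garbage_collect` runs, which is the separation between deleting references and reclaiming chunks that the text describes.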

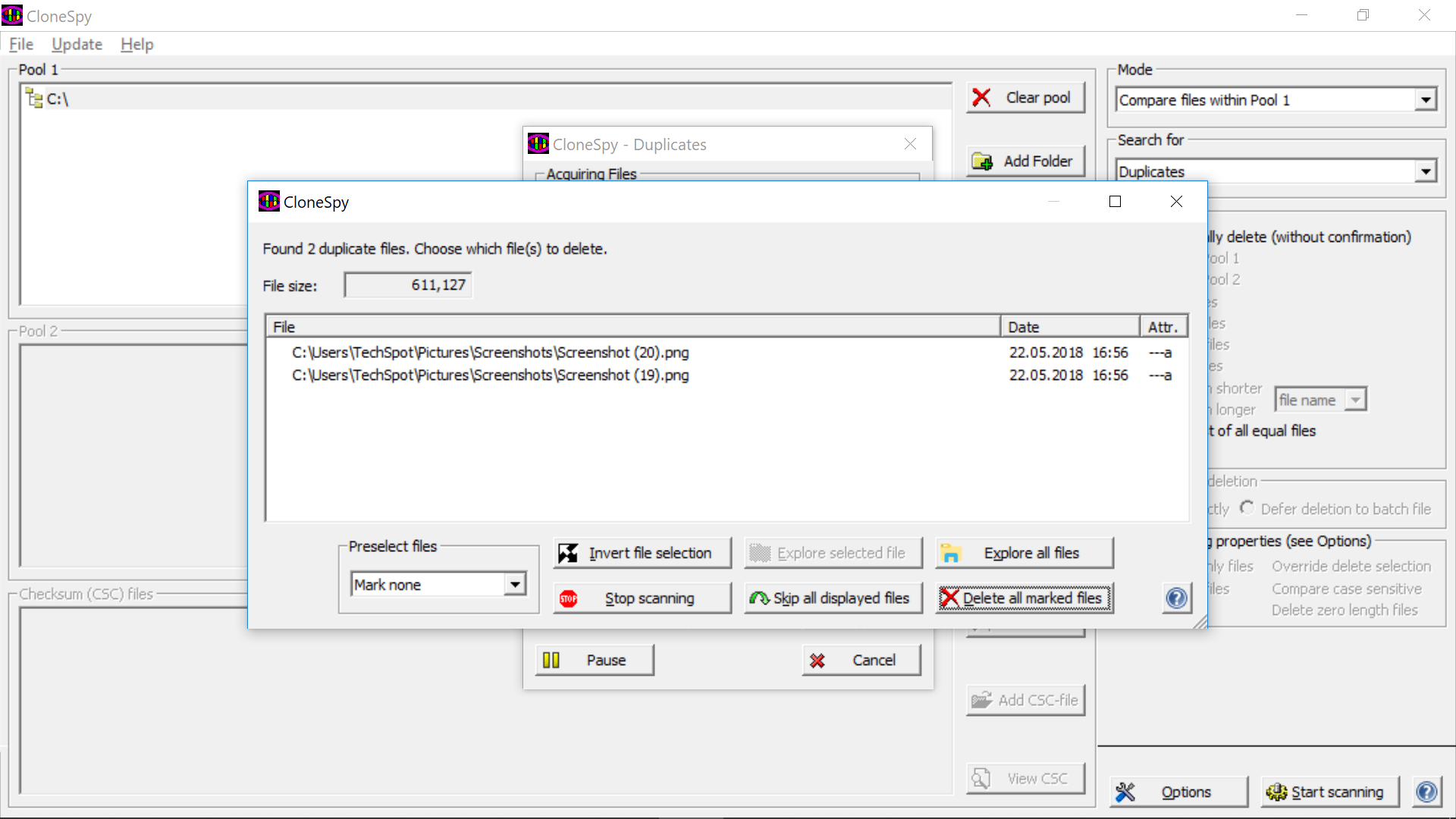

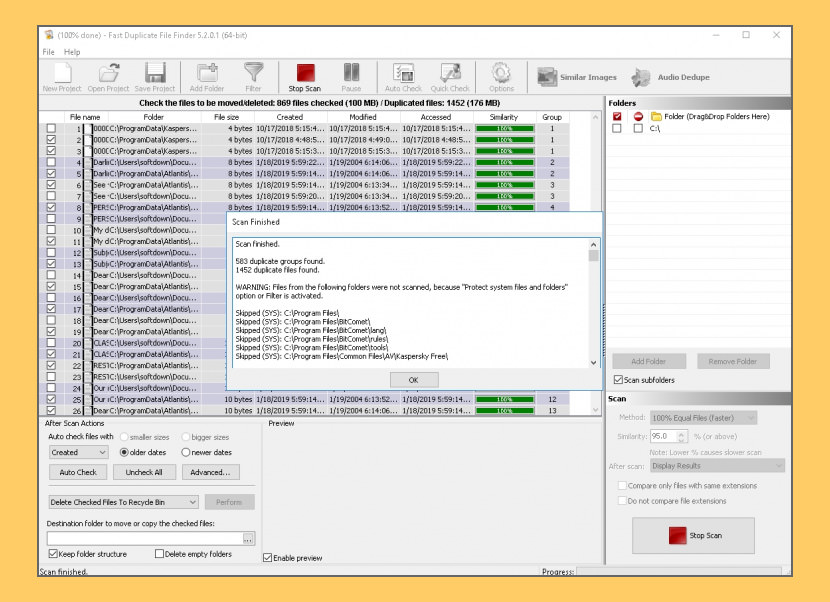

Data deduplication works by splitting data up into small variable-sized chunks, then comparing these chunks with existing chunks to identify duplicates. If a chunk is a duplicate, it is replaced with a reference, so that only a single copy of each chunk is stored. Windows Deduplication is post-process, so files are initially created full size and are not deduplicated at once, but are retained full size for a minimum amount of time before they are processed.
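The chunk-and-reference idea above can be sketched in a few lines of Python. This is a simplified illustration, not the algorithm Windows uses: the boundary rule (hashing a short window of bytes), the chunk size limits, and the names `split_chunks` and `ChunkStore` are all assumptions made for the example.

```python
import hashlib

WINDOW = 16      # bytes of context that decide whether a chunk ends here
MASK = 0x3F      # a boundary occurs when the low 6 bits of the window hash are 0
MIN_CHUNK = 16   # never cut a chunk shorter than this
MAX_CHUNK = 256  # always cut once a chunk reaches this length


def split_chunks(data: bytes) -> list:
    """Split data into variable-sized chunks using content-defined boundaries."""
    chunks = []
    start = 0
    for i in range(len(data)):
        size = i - start + 1
        if size < MIN_CHUNK:
            continue
        # Hash the last WINDOW bytes; the result depends only on content.
        window = data[i + 1 - WINDOW : i + 1]
        h = int.from_bytes(hashlib.blake2b(window, digest_size=4).digest(), "big")
        if (h & MASK) == 0 or size >= MAX_CHUNK:
            chunks.append(data[start : i + 1])
            start = i + 1
    if start < len(data):
        chunks.append(data[start:])  # trailing partial chunk
    return chunks


class ChunkStore:
    """Hypothetical store that keeps one copy of each distinct chunk."""

    def __init__(self):
        self.chunks = {}  # chunk digest -> chunk bytes

    def add_file(self, data: bytes) -> list:
        """Store a file and return its list of chunk references (digests)."""
        refs = []
        for chunk in split_chunks(data):
            digest = hashlib.sha256(chunk).hexdigest()
            self.chunks.setdefault(digest, chunk)  # duplicates are not re-stored
            refs.append(digest)
        return refs

    def read_file(self, refs: list) -> bytes:
        """Reconstitute a file from its chunk references."""
        return b"".join(self.chunks[d] for d in refs)

    def stored_bytes(self) -> int:
        return sum(len(c) for c in self.chunks.values())
```

Adding the same file twice with `add_file` returns the same list of references and leaves `stored_bytes()` unchanged, which is the "single copy of each chunk" property. Because boundaries depend on content rather than fixed offsets, a small insertion near the start of a file will typically change only the chunks around it.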

It is advisable to check whether your storage hardware supports hardware-level deduplication before considering the Windows version. Hardware dedup runs on the storage array, so deduplication does not consume CPU cycles on your server.

Data deduplication is an extension of compression. Compression works on individual files, whereas deduplication happens at block level and eliminates duplicate copies of repeating data, which includes duplicate files or duplicate data within several files.
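The difference between per-file compression and block-level deduplication can be shown with a rough Python comparison. It is a sketch only: it uses fixed-size blocks for brevity rather than variable-sized chunks, `zlib` stands in for whatever compression a file system might apply, and the function names are made up for the example.

```python
import hashlib
import zlib

BLOCK_SIZE = 64  # bytes per block; an arbitrary value for illustration


def compressed_size_per_file(files):
    """Total size when every file is compressed on its own."""
    return sum(len(zlib.compress(f)) for f in files)


def deduplicated_size(files, block_size=BLOCK_SIZE):
    """Total size when each distinct block is stored once across all files."""
    unique = {}  # block digest -> block length
    for f in files:
        for offset in range(0, len(f), block_size):
            block = f[offset : offset + block_size]
            unique.setdefault(hashlib.sha256(block).digest(), len(block))
    return sum(unique.values())
```

Given two identical files, `compressed_size_per_file` doubles, because each file is compressed without knowledge of the other, while `deduplicated_size` stays the same as for a single copy, because the second file contributes no new blocks.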